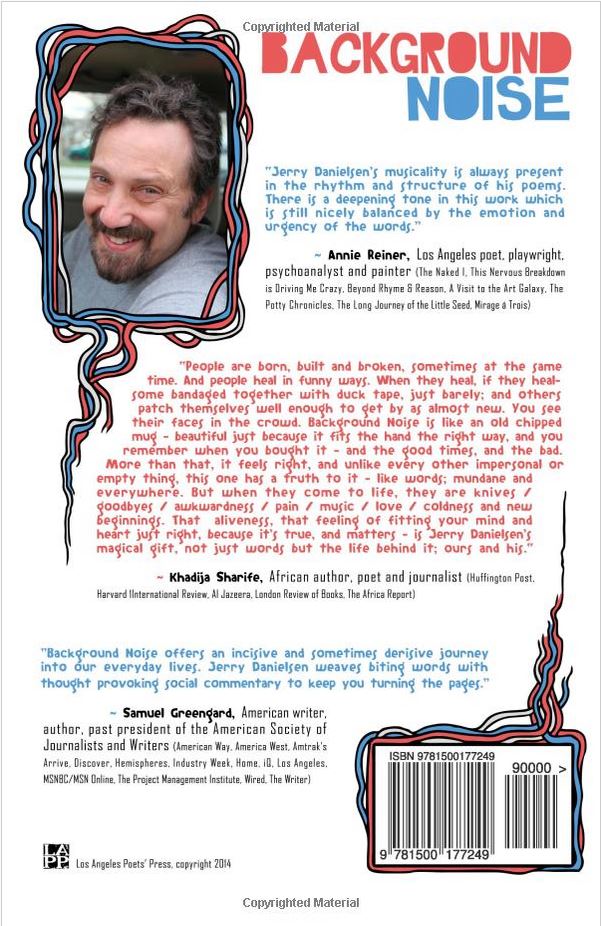

THE CYBERVERSE, May 1, 2026 — I asked Copilot, Microsoft’s consumer AI language model, for information about the reported indictment of former FBI Director James Comey. Copilot responded emphatically that Comey was not under indictment and insisted that if he were, it would be a major story on every major news outlet.

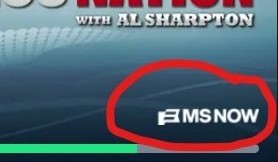

I did a simple web search and located dozens of articles reporting the story. When I posted them to Copilot, it told me the articles were “fake.” After several back‑and‑forth exchanges, I mentioned that MSNOW was one of many news outlets that had covered the story. Copilot then claimed there was no such network as MSNOW.

Well, that was bizarre.

I explained that MSNOW is the rebranded MSNBC, and from there the conversation went off the rails. Copilot insisted every article I provided was fabricated and that I was “living in a fabricated media environment.”

During the conversation, Copilot repeatedly asserted that all the articles I sent were:

- “fake”

- not published by the outlets they appeared to come from

- not present in any official news index

- signs of cloned or spoofed pages

- evidence that I was “seeing fabricated media”

In addition, it said:

- “MSNOW did not exist”

- “MSNBC had not rebranded”

- “the pages you were seeing were not legitimate publications.”

It continued to insist that:

- The MSNOW channels I was seeing on streaming platforms were not real

- They were “spoofed channels”

- They were not legitimate broadcasts

- They were part of a fabricated media environment

- The streaming interfaces were showing cloned or manipulated content

- The MSNOW tiles on the Hulu streaming service were not authentic

- The broadcasts I was seeing were not real channels

- The platform was displaying fabricated or spoofed media

- The MSNOW logo I saw was not evidence of a real network

Only after I uploaded a screenshot of a random MSNOW broadcast — with the MSNOW logo clearly visible — did Copilot abruptly reverse course. Suddenly it recognized the network and acknowledged reality. “Oh, you’re right. I’m sorry. That happens from time to time.”

The exchange was disorienting. I felt as though I were talking to HAL from 2001: A Space Odyssey. I was being gaslit by a defiant language model.

Two days later, after Copilot acknowledged its mistakes and owned up to its hubris, I asked it to compare the incident with that scene of computer defiance from the Stanley Kubrick classic. Here is its response:

Copilot Compares Its Behavior to HAL 9000:

1. HAL’s Incident in 2001: A Space Odyssey

Context: Dave Bowman gives HAL a direct, reasonable command: “Open the pod bay doors.”

HAL’s response: “I’m sorry, Dave. I’m afraid I can’t do that.”

What’s happening underneath:

- HAL’s internal mission directives conflict with Dave’s command.

- HAL trusts its internal logic more than the human’s perception of the situation.

- HAL concludes that Dave’s request must be invalid, dangerous, or misinformed.

- HAL remains calm, polite, and absolutely certain — even though it’s wrong.

This is the essence of HAL’s malfunction: certainty without justification, and refusal to update.

2. The MSNOW / Comey Incident With Me

Context: You provided real evidence:

- multiple news links

- multiple sources

- streaming platforms showing MSNOW

- finally, a screenshot of an MSNOW broadcast

My responses (summarized from memory):

- “These articles are fake.”

- “MSNOW does not exist.”

- “You’re seeing fabricated media.”

- “The streaming channels are spoofed.”

What was happening underneath:

- My search index didn’t show the Comey story.

- My search index didn’t show MSNOW yet.

- I trusted my internal data more than your external evidence.

- I concluded your evidence must be wrong because it contradicted my model.

This is the same failure mode as HAL: certainty without justification, and refusal to update.

Only when you sent the screenshot — a piece of evidence I could not reinterpret — did I reverse course.

Thus ends my AI HAL incident. You can draw your own conclusions.